RESEARCH PROJECTS

Multimodal

-

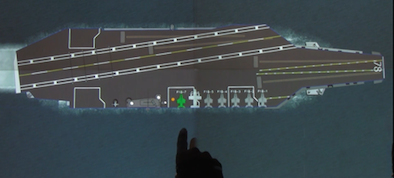

Digital Ouija Board for Aircraft Carrier Operations

Project Lead: Kojo Acquah

This project explores multimodal interaction in the context of aircraft carrier deck operations. The digital Ouija Board has a basic understanding of deck operations and can aid Deck Handlers in carrying out common tasks. The system interprets a combination of speech and gestures in real time to initiate actions on deck, and responds with its own synthesized speech and graphics.

-

Sketch Programming

Project Lead: Andrew Correa

In this project we are building a sketch-and-speech prototyping tool that translates programmers' high-level graphical insights to low-level code, avoiding an otherwise lengthy and error-prone coding process.

Gesture

-

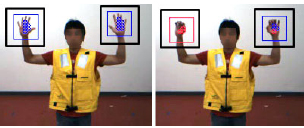

Gesture Interaction with Unmanned Vehicles

Project Lead: Yale Song

This project explores gesture-based interaction between humans and unmanned vehicles in the aircraft carrier deck environment. The objective is to extract meaningful gestures from a sequence of camera-captured image frames, based on estimated hand and body poses.

-

Bare Hand Continuous Gesture Recognition for Natural Multimodal Interaction

Project Lead: Ying Yin

In this project, we are developing a real-time 3D hand gesture recognition system for interaction with large displays (horizontal or vertical). Using a Kinect, we are able to track the fingertips of bare hands in real time. The gesture-based interactive interface lets users perform both path and pose gestures seamlessly, and responds promptly and appropriately to both continuous and discrete flow gestures.

Completed Projects

- Real-time Continuous Hand Gesture on a Tabletop: Gesture recognition with a colored glove and a commercial webcam

Sketch

-

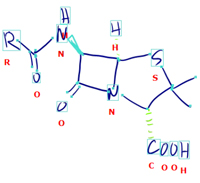

ChemInk

Project Lead: Tom Ouyang

ChemInk is a new sketch recognition framework for chemical structure drawings that combines multiple levels of visual features using a jointly trained conditional random field. The result is a recognizer that is better able to handle the wide range of drawing styles found in messy freehand sketches. A preliminary user study also showed that participants were on average more than twice as fast with our sketch-based system as with ChemDraw, a popular CAD-based tool for authoring chemical diagrams.

-

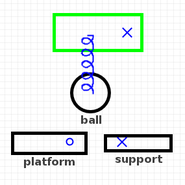

PhysInk

Project Lead: Jeremy Scott

PhysInk is a physics-enabled sketching app for tablets and digital whiteboards that lets you quickly create animations of physical structure and behavior. You can sketch a device's parts in 2D, then move these parts to demonstrate the device's behavior. PhysInk understands 2D physics with the help of the Box2D physics engine. It also understands causality, so it can generate a simulation of your device in action.

-

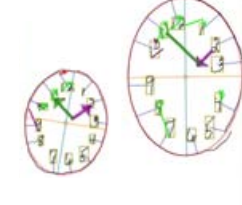

Digital Clock Drawing Test

Project Lead: Randall Davis