Download

Full Dataset

Register here to download the ADE20K dataset and annotations. By doing so, you agree to the terms of use.

Toolkit

See our GitHub Repository for an overview of how to access and explore ADE20K.

Scene Parsing Benchmark

Scene parsing data and part segmentation data derived from ADE20K dataset could be downloaded from MIT Scene Parsing Benchmark.

Terms of Use

See ADE20K's dataset Terms of Use

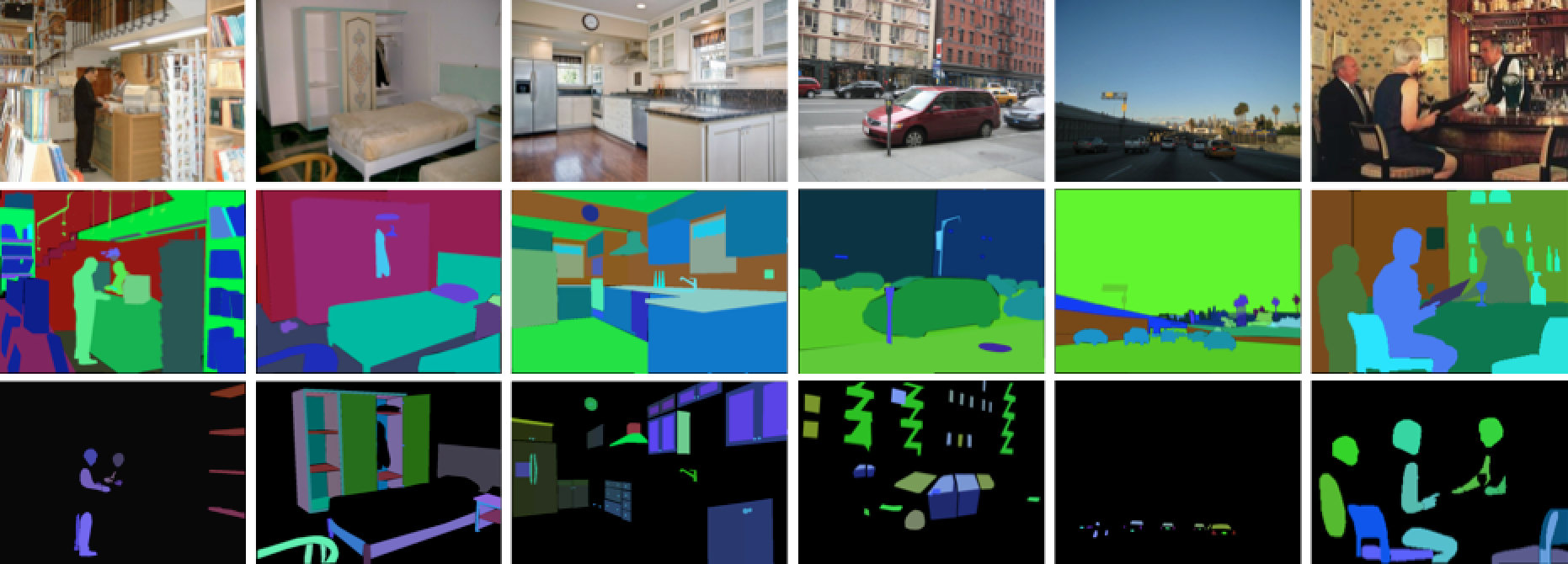

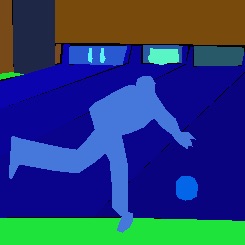

Training set

All images are fully annotated with objects and, many of the images have parts too.

Validation set

Fully annotated with objects and parts