6.837 F98 Lecture 16: November 10, 1998

(Thanks to John Alex for help preparing these slides.)

Administrative

Visibility

Our trip through the graphics pipeline is nearly complete.

We now know how to transform, clip, and project polygons into NDC (canonical coordinates, or normalized device coordinates).

But we only recently addressed what to do when a scene primitive is invisible; that is, when it contributes no pixels to the rendered framebuffer.

A scene primitive can be invisible for three reasons:

Second example of visibility computation: back-face elimination

Q. How can we implement this?

Idea: compare view direction, face normal

for each face F of each object

N = outward-pointing normal of F

if N dot V > 0, throw away the face
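The normal test above can be sketched in a few lines of Python. This is a minimal illustration rather than the course's implementation: it assumes each face is a tuple of 3-D vertices listed counter-clockwise when seen from outside, and it takes V to be the vector from the eye to a vertex of the face (a common choice under perspective projection). The helper names `sub`, `cross`, `dot`, `face_normal`, and `cull_back_faces` are hypothetical.

```python
def sub(a, b):
    """Component-wise a - b for 3-vectors."""
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])

def cross(a, b):
    """Cross product of two 3-vectors."""
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])

def dot(a, b):
    """Dot product of two 3-vectors."""
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]

def face_normal(face):
    """Outward normal, assuming counter-clockwise vertex order (an assumption)."""
    v0, v1, v2 = face[0], face[1], face[2]
    return cross(sub(v1, v0), sub(v2, v0))

def cull_back_faces(faces, eye):
    """Keep only faces for which N dot V <= 0, where V points from eye to face."""
    kept = []
    for face in faces:
        n = face_normal(face)
        v = sub(face[0], eye)      # view direction toward this face
        if dot(n, v) > 0:          # normal points away from the eye: back-facing
            continue
        kept.append(face)
    return kept

# Two triangles in the plane z = 0 with opposite winding, eye on the +z axis.
front = ((0, 0, 0), (1, 0, 0), (0, 1, 0))   # normal is +z, toward the eye
back = ((0, 0, 0), (0, 1, 0), (1, 0, 0))    # normal is -z, away from the eye
visible = cull_back_faces([front, back], eye=(0, 0, 5))
```

The loop touches each face exactly once and does a constant amount of work per face.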

Can we apply this "normal test" anywhere in the pipeline?

When should back-face culling be used?

What is its cost for a collection of n polygons?

Back-face culling is an example of a criterion that can be applied anywhere along the pipeline: in world coords, eye coords, NDC, even in screen space!

Where is the best place in the pipeline to apply back-face culling?

What portion of the scene will it tend to eliminate, on average?

Suppose we clip our scene to the frustum, then remove all back-facing polygons.

Are we done? That is, does this solve our visibility problem?

No! For most interesting scenes and viewpoints, some polygons will overlap; somehow, we must determine which portion of each polygon is visible to the eye.

One possibility: simply draw the polygons in the "right order" so that the right picture results (example: blue, then green, then peach). So is visibility just a back-to-front sorting problem?

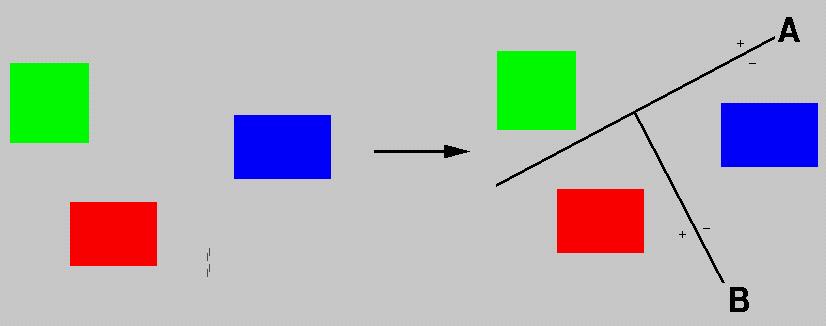

In 2-D, for non-intersecting line segments, yes -- visibility reduces to sorting.

However, in 3-D even non-intersecting polygons can form a cycle (above), which has no valid visibility order!
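One way to see why a cycle defeats sorting is to treat "must be drawn before" as a directed graph and ask for a topological order. The sketch below assumes that relation has already been computed and is handed in as a dictionary; the names `draw_order` and `behind` are hypothetical. It uses `graphlib.TopologicalSorter` from the Python standard library (Python 3.9 or later).

```python
from graphlib import TopologicalSorter, CycleError

def draw_order(behind):
    """Return a back-to-front drawing order, or None if none exists.

    `behind` maps each polygon name to the set of polygons that lie behind it
    and therefore must be drawn first (an assumed, precomputed relation).
    """
    try:
        return list(TopologicalSorter(behind).static_order())
    except CycleError:
        return None   # cyclic overlap: no ordering of whole polygons works

# Blue is behind green, green is behind peach: a valid order exists.
chain = {"blue": set(), "green": {"blue"}, "peach": {"green"}}

# Three polygons that each overlap the next: a cycle, so no valid order.
cycle = {"a": {"c"}, "b": {"a"}, "c": {"b"}}

chain_order = draw_order(chain)
cycle_order = draw_order(cycle)
```

When no order exists, at least one polygon has to be cut into fragments before any drawing order can give the right picture.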

Early algorithms for visibility (now called "analytic" algorithms) computed the set of visible fragments directly, in object space, then rendered the fragments to a pen plotter, or to a vector display, or (a bit later on) to a framebuffer.

What is the cost of describing (and computing) the fragment map at right (above) for a scene composed of n polygons (say, triangles or quadrilaterals)?

... It's quadratic in n!

Now, as soon as renderers were invented, people started crafting geometric models. Realism called for lots of polygons, which spelled trouble for these costly algorithms.

So, for about a decade (from the late '60s to the late '70s) there was intense interest in finding efficient algorithms for hidden surface removal.

There's quite an extended literature about this; today I'll show just two nifty algorithms, one called BSP traversal, from 1979/80, and one called Warnock's algorithm, from 1968/69.

BSP traversal is one of a class of "list-priority" visibility algorithms

Building a tree from non-polygonal objects: choose split planes that "well-separate" objects from one another:

Dynamic query: produce an ordered polygon list from the tree and the instantaneous eyepoint

node::draw (viewpoint)
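A back-to-front traversal in the spirit of `node::draw (viewpoint)` might look like the following Python sketch. It is an illustration under stated assumptions, not the lecture's code: the tree is built by hand, each node stores one labeled polygon together with its plane as `dot(normal, p) + offset = 0`, and "drawing" just appends the label to a list. The class and field names are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional, Tuple

@dataclass
class Node:
    label: str                              # stands in for the node's polygon
    normal: Tuple[float, float, float]      # plane normal
    offset: float                           # plane: dot(normal, p) + offset = 0
    front: Optional["Node"] = None          # subtree on the positive side
    back: Optional["Node"] = None           # subtree on the negative side

    def side(self, p):
        """Signed side of point p relative to this node's plane."""
        return sum(n * c for n, c in zip(self.normal, p)) + self.offset

    def draw(self, viewpoint, out=None):
        """Append labels in back-to-front order as seen from viewpoint."""
        if out is None:
            out = []
        if self.side(viewpoint) >= 0:
            far, near = self.back, self.front    # eye is on the front side
        else:
            far, near = self.front, self.back    # eye is on the back side
        if far is not None:
            far.draw(viewpoint, out)             # farthest subtree first
        out.append(self.label)                   # then this node's polygon
        if near is not None:
            near.draw(viewpoint, out)            # nearest subtree last
        return out

# Three parallel polygons on the planes x = -2, x = 0, and x = 2.
tree = Node("middle", (1, 0, 0), 0.0,
            front=Node("right", (1, 0, 0), -2.0),
            back=Node("left", (1, 0, 0), 2.0))

from_right = tree.draw((5, 0, 0))
from_left = tree.draw((-5, 0, 0))
```

The same tree serves every eyepoint; only the order in which the two subtrees are visited changes.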

BSP traversal is called a "hidden surface elimination" algorithm, but it doesn't really "eliminate" anything; it simply orders polygons so the resulting picture is correct.

Can we always find a polygon ordering (without fragments) that gives the right rendered image?

... Nope!

What are the time and space requirements for a BSP tree of n polygons?

What is the cost of a query for a single eyepoint?

Next: Warnock's algorithm (1969). This is an elegant hybrid of object-space and image-space computations, based on a simple idea that has recurred over and over again in computer graphics: If the situation is too complex, subdivide.

0) start with a "root viewport", and a list of all scene primitives (transformed to screen-space)

Then, recursively:

1) classify each object as incident to, or outside of, the viewport

2) if the number of incident objects is zero or one, visibility is established for this portion of the screen; else subdivide into smaller frusta, distribute incident objects to them, and recurse.
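The recursion in steps 0 to 2 can be sketched as follows. To keep the example short it makes several assumptions that are not in the notes: objects are axis-aligned screen-space rectangles `(x0, y0, x1, y1)` with a single depth `z` (smaller is nearer), the viewport is split into four quadrants, and when a viewport shrinks to one pixel that still has several incident objects, the nearest one wins. All names are hypothetical.

```python
def overlaps(a, b):
    """True if half-open rectangles a and b (x0, y0, x1, y1) intersect."""
    return a[0] < b[2] and b[0] < a[2] and a[1] < b[3] and b[1] < a[3]

def warnock(view, objects, image):
    """Resolve visibility inside view, writing labels into image[y][x]."""
    x0, y0, x1, y1 = view
    if x0 >= x1 or y0 >= y1:
        return                                    # empty viewport
    # Step 1: classify objects as incident to, or outside of, the viewport.
    incident = [o for o in objects if overlaps(view, o["rect"])]
    # Step 2: zero or one incident object means visibility is established.
    if not incident:
        return
    if len(incident) == 1:
        r = incident[0]["rect"]
        for y in range(max(y0, r[1]), min(y1, r[3])):
            for x in range(max(x0, r[0]), min(x1, r[2])):
                image[y][x] = incident[0]["label"]
        return
    # Assumed termination rule: at a single pixel, the nearest object wins.
    if x1 - x0 == 1 and y1 - y0 == 1:
        image[y0][x0] = min(incident, key=lambda o: o["z"])["label"]
        return
    # Otherwise subdivide, hand the incident objects down, and recurse.
    mx, my = (x0 + x1) // 2, (y0 + y1) // 2
    for quad in ((x0, y0, mx, my), (mx, y0, x1, my),
                 (x0, my, mx, y1), (mx, my, x1, y1)):
        warnock(quad, incident, image)

# A 4 x 4 screen with two overlapping rectangles; B is nearer than A.
scene = [{"label": "A", "rect": (0, 0, 3, 3), "z": 5},
         {"label": "B", "rect": (1, 1, 4, 4), "z": 2}]
image = [[None] * 4 for _ in range(4)]
warnock((0, 0, 4, 4), scene, image)
```

Only the regions where the two rectangles overlap get subdivided down to pixel size; the rest of the screen is settled after one or two levels of recursion.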

And how is the correct surface identified in this case?

Third example of a visibility operation is z-buffering (or depth buffering).

Both of the above algorithms were proposed when memory was very expensive (the first 512 x 512 framebuffer cost more than fifty thousand dollars).

In the mid-'70s, Ed Catmull had access to a framebuffer and proposed a radically new visibility algorithm: z-buffering. The idea of z-buffering is to resolve visibility independently at each pixel.

At the beginning of each frame, initialize all pixel depths to the largest integer (distant background)
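A minimal depth-buffer loop, written as a Python sketch, shows how the initialization above combines with a per-pixel depth test. It assumes rasterization has already happened and the input is a flat list of fragments `(x, y, z, color)` with integer depths, smaller meaning nearer; the function name `render` and the use of `sys.maxsize` as the "largest integer" are illustrative choices.

```python
import sys

def render(width, height, fragments, background=None):
    """Resolve visibility per pixel with a depth buffer."""
    # Start of frame: every pixel is at the most distant depth.
    depth = [[sys.maxsize] * width for _ in range(height)]
    color = [[background] * width for _ in range(height)]
    for x, y, z, c in fragments:
        if z < depth[y][x]:        # nearer than what is stored at this pixel?
            depth[y][x] = z        # remember the new nearest depth
            color[y][x] = c        # and overwrite the pixel's color
    return color, depth

fragments = [(0, 0, 10, "far"), (0, 0, 3, "near"), (1, 0, 7, "only")]
color, depth = render(2, 2, fragments)
# Submitting the same fragments in the opposite order gives the same picture.
color_reversed, _ = render(2, 2, list(reversed(fragments)))
```

Each pixel is resolved independently of the others, so the fragments need no sorting before they are submitted.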

Properties of depth-buffer:


Last modified: Nov 1998

Prof. Seth Teller, MIT Computer Graphics Group, seth@graphics.lcs.mit.edu